Scraping Without Getting Stopped: A Practical Throughput Playbook for Product and Marketing Teams

Web data acquisition succeeds when it looks and behaves like normal traffic. That is harder than it sounds. Automated traffic now accounts for about one third of web visits, and malicious bots make up a large share of it, so defensive systems are tuned to be suspicious by default. If you want consistent access to the product pages, classifieds, reviews, or SERP features that feed business and marketing decisions, you need a plan grounded in facts, not guesswork.

The good news is that a few measurable choices around transport, identity, and content scope can lift success rates while cutting waste. The following approach is what I use to ship scrapers that hold up in production across months, not days.

Build a throughput budget before you add threads

Most scraping failures come from saturating your own network or tripping the target's soft limits, not from code bugs. Start by sizing how much traffic the target will actually tolerate and how much your own pipe can carry.
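One way to make that sizing concrete is a back-of-the-envelope budget. The sketch below is illustrative only: the function name and its three inputs are assumptions, and the real values have to come from your own measurements of the target.

```python
def pages_per_hour(bytes_per_page, bandwidth_bytes_per_s, max_requests_per_s):
    """Return the sustainable page rate per hour.

    The rate is the tighter of two limits: what the bandwidth can carry
    and what the pacing cap (requests per second) allows.
    """
    if bytes_per_page <= 0:
        raise ValueError("bytes_per_page must be positive")
    by_bandwidth = bandwidth_bytes_per_s / bytes_per_page  # pages per second
    per_second = min(by_bandwidth, max_requests_per_s)
    return int(per_second * 3600)
```

With a hypothetical 1 MB/s of bandwidth and a pacing cap of 2 requests per second, a 100 KB HTML-only fetch is pacing-bound, while a 2 MB full page load becomes bandwidth-bound and the hourly yield drops to a quarter. Adding threads changes neither limit.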

Measure page weight, not just URL count

The median desktop page on the public web weighs around a couple of megabytes and fires dozens of requests, but your crawler rarely needs any of that except the HTML. Fetching only HTML typically cuts transferred bytes by more than 90 percent compared with a full page load. Favor server-side DOM parsing over headless browsers wherever possible; where a browser is unavoidable, disable image, stylesheet, and font retrieval. You reduce bandwidth, shrink your visible footprint, and leave room for graceful retries.
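As a rough illustration of parsing the HTML directly instead of rendering it, the sketch below pulls a few fields out of a document with Python's standard library parser. The class names `title` and `price` are hypothetical; a production scraper would more likely use a full selector library.

```python
from html.parser import HTMLParser


class FieldExtractor(HTMLParser):
    """Collects the text of the first element carrying each wanted class.

    Simplification: capture ends at the first closing tag with the same
    name as the opening tag, so same-name nesting is not handled.
    """

    def __init__(self, wanted_classes):
        super().__init__()
        self.wanted = set(wanted_classes)
        self.fields = {}          # class name -> extracted text
        self._active = None       # (class name, tag) being captured

    def handle_starttag(self, tag, attrs):
        if self._active is not None:
            return  # already inside a captured element
        classes = (dict(attrs).get("class") or "").split()
        for name in classes:
            if name in self.wanted and name not in self.fields:
                self._active = (name, tag)
                self.fields[name] = ""
                break

    def handle_data(self, data):
        if self._active is not None:
            self.fields[self._active[0]] += data

    def handle_endtag(self, tag):
        if self._active is not None and tag == self._active[1]:
            name = self._active[0]
            self.fields[name] = self.fields[name].strip()
            self._active = None


def extract_fields(html, wanted_classes):
    """Parse an HTML string and return {class name: text} for wanted classes."""
    parser = FieldExtractor(wanted_classes)
    parser.feed(html)
    parser.close()
    return parser.fields
```

No images, stylesheets, or fonts are ever requested here, because nothing in this path executes the page.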

Use connection reuse and modern transport

A full TLS 1.3 handshake completes in one round trip, and HTTP/2 multiplexes requests over a single connection. That combination lowers handshake overhead and flattens the latency spikes and connection churn that look like sudden bursts to rate limiters. Keep connections warm, respect server hints like Retry-After, and cap concurrent requests per origin to a number a human session could plausibly generate.
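Two of these habits are easy to sketch with the standard library: honoring Retry-After, which may arrive as a number of seconds or as an HTTP date, and capping in-flight requests per origin. The names `retry_after_seconds` and `OriginLimiter` and the default cap of 4 are assumptions for illustration, not recommended values.

```python
import threading
import time
from email.utils import parsedate_to_datetime
from urllib.parse import urlsplit


def retry_after_seconds(header_value, now=None):
    """Convert a Retry-After value (delta-seconds or HTTP-date) to a wait in seconds."""
    if not header_value:
        return 0.0
    value = header_value.strip()
    if value.isdigit():
        return float(value)
    try:
        target = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return 0.0  # unparseable hint: fall back to the caller's own backoff
    now = time.time() if now is None else now
    return max(0.0, target.timestamp() - now)


class OriginLimiter:
    """Caps concurrent requests per origin with one semaphore per scheme and host."""

    def __init__(self, max_per_origin=4):
        self._max = max_per_origin
        self._lock = threading.Lock()
        self._slots = {}

    def slot(self, url):
        """Return the semaphore for the URL's origin; use it as a context manager."""
        parts = urlsplit(url)
        origin = f"{parts.scheme}://{parts.netloc}"
        with self._lock:
            if origin not in self._slots:
                self._slots[origin] = threading.BoundedSemaphore(self._max)
            return self._slots[origin]
```

A worker would wrap each fetch in `with limiter.slot(url):` and sleep for `retry_after_seconds(...)` when a response carries the header.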

Reduce false positives in anti-bot systems

Scrapers often get flagged not because of what they fetch, but because of how they present themselves on the wire.

Stability beats frantic rotation

There are only about 4.3 billion IPv4 addresses, while the online population is well above five billion users, so many people sit behind shared addresses such as carrier-grade NAT and an IP alone is a weak identity signal. Many sites therefore score traffic by session longevity, cookie reuse, and consistency across TLS and HTTP fingerprints. Hold state. Reuse cookies. Keep a session alive long enough to look normal, then retire it. Rotate subnets and user agents on sensible boundaries such as account, search term, or region, not on every request.
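A minimal sketch of that pattern keeps one sticky session per boundary key and retires it after a request or age limit. The `SessionPool` class, the key format, and the limits of 200 requests and 30 minutes are hypothetical; tune them against what the target treats as a normal visit.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Session:
    key: str
    user_agent: str
    cookies: dict = field(default_factory=dict)  # reused for the session's lifetime
    created_at: float = 0.0
    requests_made: int = 0


class SessionPool:
    """Hands out one long-lived session per boundary key (account, term, region)."""

    def __init__(self, user_agents, max_requests=200, max_age_s=1800.0,
                 clock=time.monotonic):
        self._user_agents = list(user_agents)
        self._max_requests = max_requests
        self._max_age_s = max_age_s
        self._clock = clock
        self._sessions = {}
        self._created = 0

    def get(self, key):
        """Return the live session for key, replacing it only once it has expired."""
        now = self._clock()
        session = self._sessions.get(key)
        expired = session is not None and (
            session.requests_made >= self._max_requests
            or now - session.created_at >= self._max_age_s
        )
        if session is None or expired:
            # Rotation happens here, on a session boundary, never per request.
            agent = self._user_agents[self._created % len(self._user_agents)]
            session = Session(key=key, user_agent=agent, created_at=now)
            self._sessions[key] = session
            self._created += 1
        session.requests_made += 1
        return session
```

Calling `pool.get("region:de")` repeatedly returns the same cookies and user agent until the limit is hit, which is the stability the scoring systems reward.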

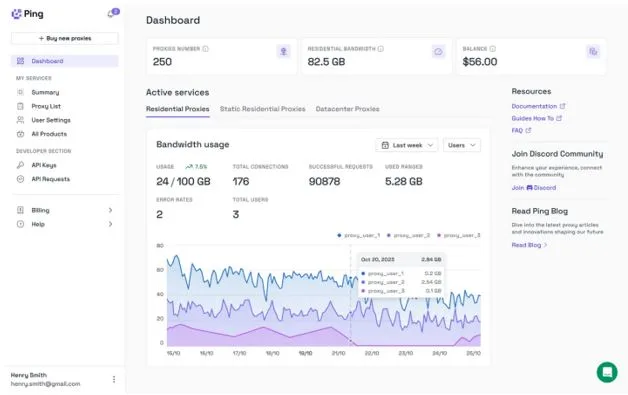

Choose proxy types by job, not habit

Residential IPs are versatile but slower and costlier per successful page. Datacenter IPs are faster, cheaper, and excellent for static or lightly dynamic content when paired with steady sessions and realistic pacing. If speed and predictability are the bottleneck, a well-managed pool of datacenter proxies can lift throughput while keeping block rates low. Match exit geography to the content’s audience to avoid suspicious cross-border access patterns.
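"Costlier per successful page" can be turned into a number by dividing the price of a request by the measured success rate for a given job. The helper names below are illustrative, and the inputs must come from your own billing and logs.

```python
def cost_per_successful_page(cost_per_request, success_rate):
    """Effective cost of one usable page, given the fraction of requests that succeed."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_request / success_rate


def cheapest_pool(pools):
    """Pick the pool name with the lowest cost per successful page.

    pools maps a name to a (cost_per_request, success_rate) pair measured
    for one specific job, since success rates differ by target.
    """
    return min(pools, key=lambda name: cost_per_successful_page(*pools[name]))
```

Run the comparison per target rather than globally: a pool that wins on static catalog pages can lose badly on a heavily defended search endpoint.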

Make freshness cheaper than first-time fetches

Re-crawls dominate mature pipelines. Optimizing for change detection saves money and reduces block pressure.

Lean on conditional requests and content hashes

Track ETag and Last-Modified headers. A 304 Not Modified costs a fraction of a full payload yet confirms freshness. When headers are missing, compute a stable hash of the relevant DOM fragment and skip write paths when the hash is unchanged. This shifts bandwidth from redundant transfers to genuinely new pages.
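The two checks can be sketched as small pure functions: one builds the conditional request headers from stored validators, the other hashes the relevant fragment and decides whether a write is needed. The cache record layout (`etag`, `last_modified`) and the function names are assumptions.

```python
import hashlib
import re


def conditional_headers(cached):
    """Build If-None-Match / If-Modified-Since from validators saved on the last fetch."""
    headers = {}
    if cached.get("etag"):
        headers["If-None-Match"] = cached["etag"]
    if cached.get("last_modified"):
        headers["If-Modified-Since"] = cached["last_modified"]
    return headers


def fragment_hash(fragment_text):
    """Hash a DOM fragment after collapsing whitespace so reflowed markup hashes the same."""
    normalized = re.sub(r"\s+", " ", fragment_text).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def needs_write(status, fragment_text, previous_hash):
    """Return (should_write, hash_to_store) for a re-crawl response."""
    if status == 304:
        return False, previous_hash  # server confirmed freshness, no body to hash
    new_hash = fragment_hash(fragment_text)
    return new_hash != previous_hash, new_hash
```

Hash only the fragment that carries the data you store. Hashing the whole page tends to flag every rotating banner or timestamp as a change.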

Crawl the site the way it suggests

Respect robots directives and prioritize discovery sources the publisher provides. Sitemaps, category listings, and pagination patterns create predictable paths that are less likely to trigger anomaly detectors than randomized deep links. Predictability that mirrors normal use is protective.
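As a small sketch of publisher-guided discovery, the function below reads URLs from a sitemap and keeps only those that robots.txt allows for a given user agent. It works on text that has already been fetched; the function name and the user agent string you pass in are placeholders.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Namespace used by the sitemaps.org 0.9 protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def allowed_sitemap_urls(robots_txt, sitemap_xml, user_agent):
    """Return sitemap URLs, in document order, that robots.txt permits for user_agent."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())

    root = ET.fromstring(sitemap_xml)
    urls = [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]
    return [url for url in urls if robots.can_fetch(user_agent, url)]
```

Sitemap index files, which point at further sitemaps, are not handled in this sketch.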

Instrument outcomes, not just errors

You cannot tune what you cannot see. Success in scraping is a quality and yield problem, not just a transport problem.

Track block signals as primary KPIs

Monitor soft and hard indicators separately. Soft signals include sudden shifts to lightweight HTML, missing key selectors, or splash pages that render fine but lack data. Hard signals are 403, 429, or forced interstitials. Keep rolling baselines by origin and by exit network. A rising 429 rate with a normal median time to first byte (TTFB) calls for slower pacing, while a jump in 403s after a client update points to fingerprint drift.
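One possible shape for this instrumentation is a response classifier plus a rolling window per origin and exit network. The 2048-byte floor, the marker strings, and the window size are assumptions, and detecting interstitials would need a target-specific check that this sketch leaves out.

```python
from collections import defaultdict, deque

HARD_STATUSES = {403, 429}


def classify(status, body, required_markers, min_bytes=2048):
    """Label a response as 'hard', 'soft', 'ok', or 'other'.

    Soft means a 200 that is suspiciously small or lacks the markers
    (for example selector strings) that a real data page always contains.
    """
    if status in HARD_STATUSES:
        return "hard"
    if status == 200:
        if len(body) < min_bytes or any(m not in body for m in required_markers):
            return "soft"
        return "ok"
    return "other"


class RollingRates:
    """Keeps the last `window` labels per (origin, exit network) pair."""

    def __init__(self, window=500):
        self._events = defaultdict(lambda: deque(maxlen=window))

    def record(self, origin, exit_network, label):
        self._events[(origin, exit_network)].append(label)

    def rate(self, origin, exit_network, label):
        """Share of recent responses with the given label, 0.0 if nothing recorded."""
        events = self._events.get((origin, exit_network))
        if not events:
            return 0.0
        return sum(1 for e in events if e == label) / len(events)
```

Alert on the change in `rate(...)` against its own baseline, not on an absolute threshold, since normal block rates differ widely between origins.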

Validate data at the edge

Enforce schema and range checks before storage. Price fields should parse as numbers within sane bounds, dates should normalize, and required selectors must be present. Reject early, log context, and quarantine the session that produced the anomaly instead of poisoning downstream analytics. Clean inputs let marketing and product trust the feed without extra reconciliation work.
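A minimal validation gate might look like the sketch below. The field names (`title`, `price`, `listed_on`), the ISO date format, and the price bounds are hypothetical and stand in for whatever your schema requires.

```python
from datetime import date
from decimal import Decimal, InvalidOperation

REQUIRED_FIELDS = ("title", "price", "listed_on")


def validate_record(raw, min_price=Decimal("0.01"), max_price=Decimal("100000")):
    """Return (clean_record, errors); clean_record is None when any check fails."""
    errors = [f"missing:{name}" for name in REQUIRED_FIELDS if not raw.get(name)]
    if errors:
        return None, errors

    clean = {"title": str(raw["title"]).strip()}

    try:
        price = Decimal(str(raw["price"]).strip())
    except InvalidOperation:
        errors.append("price:not_a_number")
    else:
        # is_finite() rejects NaN and Infinity, which Decimal would otherwise accept.
        if not price.is_finite() or not (min_price <= price <= max_price):
            errors.append("price:out_of_range")
        else:
            clean["price"] = price

    try:
        clean["listed_on"] = date.fromisoformat(str(raw["listed_on"]).strip())
    except ValueError:
        errors.append("listed_on:bad_date")

    if errors:
        return None, errors
    return clean, []
```

When `errors` is non-empty, log it together with the session identifier and URL so the session that produced the record can be quarantined.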

Scraping at scale is a systems problem that rewards restraint and measurement. Move fewer bytes, reuse more state, prefer steady sessions over noisy rotation, and let transport and validation do the heavy lifting. Done well, these habits raise acquisition reliability, lower cost per row, and give your business the confidence to plan around data rather than work around it.