Why Faster Video Production Now Depends on Workflow, Not Just Models

Core angle: Teams do not struggle because there are too few AI video tools. They struggle because disconnected tools create slow handoffs, inconsistent outputs, and unclear costs.

There is no shortage of new AI video tools. Every month seems to bring another model, another demo, and another promise of faster creation. Yet many teams still feel slow. They spend too much time moving between tools, rewriting prompts, regenerating scenes, and stitching everything together by hand. The problem is no longer access to generation. The problem is workflow.

That shift matters because modern video production is not just about getting one impressive output. Businesses need repeatable ways to create product clips, social ads, explainers, short-form content, and campaign variations without starting from zero each time. When the workflow is weak, even good models produce messy operations. When the workflow is strong, the same models become far more useful.

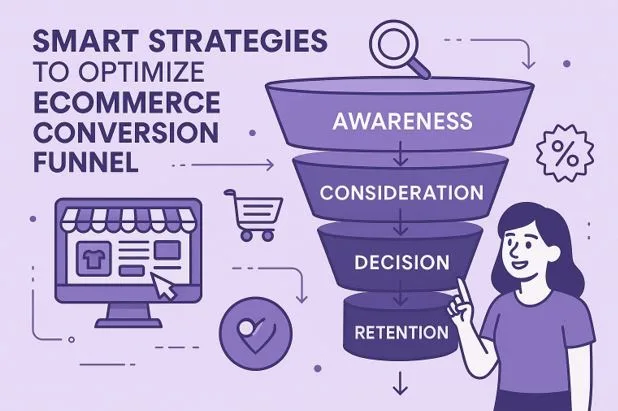

A practical workflow starts by treating video creation as a system with stages rather than a single generation step. Teams usually begin with a concept, then create source visuals, then animate them, then refine timing, framing, and format for the destination channel. If each of those steps happens in a separate environment, production slows down fast. Files get lost, prompts drift, version control becomes messy, and nobody is fully sure which model or settings created the best result.
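One way to keep track of which model and settings produced the best result is to log every generation attempt in a small structured record. The sketch below is illustrative only: the `GenerationRun` fields, the stage names, and the model labels `model-a` and `model-b` are hypothetical placeholders, not any real tool's schema.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class GenerationRun:
    """One generation attempt, with enough context to reproduce it later."""
    stage: str      # hypothetical stage label, e.g. "source_visual" or "animation"
    model: str      # hypothetical model label, not a real product name
    prompt: str
    settings: dict = field(default_factory=dict)
    rating: int = 0  # reviewer score; 0 means not yet reviewed


def best_run(runs, stage):
    """Return the highest-rated run for a stage, or None if the stage has no runs."""
    candidates = [r for r in runs if r.stage == stage]
    return max(candidates, key=lambda r: r.rating, default=None)


# Example data (made up for illustration).
runs = [
    GenerationRun("animation", "model-a", "slow push-in on product", {"seconds": 5}, rating=3),
    GenerationRun("animation", "model-b", "slow push-in on product", {"seconds": 5}, rating=4),
]

winner = best_run(runs, "animation")
print(json.dumps(asdict(winner), indent=2))
```

Even a log this small answers the question "which model and settings made the version we shipped?" without relying on anyone's memory.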

Why disconnected video creation stacks break down

A fragmented stack often looks manageable at first. One tool handles image generation. Another animates still frames. A third handles cleanup or edits. Then somebody exports assets into a separate editor, resizes for vertical and square formats, and manually documents what worked. This is tolerable for one experiment. It becomes a bottleneck when a team needs ten versions by Friday.

The hidden cost is not only subscription overlap. It is the repeated context switching. Every switch forces the creator to reframe intent. Camera motion choices, aspect ratio, prompt phrasing, and brand consistency all become harder to maintain when the workflow is split across multiple products. The result is uneven quality and slower turnaround, even when each standalone tool is technically strong.

What a better workflow looks like

A stronger process usually has four parts: planning, asset generation, motion generation, and output adaptation. In the best setups, those steps are connected closely enough that creators can move from concept to publishable deliverables without rebuilding the project context every time. That is one reason platforms positioned around an AI video generator workflow are attracting more attention. Instead of forcing users to jump between disconnected steps, they make it easier to move from still image to motion, test multiple approaches, and export for the channels that actually matter.

The planning stage remains human. Teams still need to decide the audience, the offer, the emotional tone, and the action they want viewers to take. AI works best when that intent is clear. Once that foundation is in place, the generation layer becomes much more efficient. Source visuals can be created or refined, then turned into short-form motion with controlled pacing, camera movement, and scene energy.

The next improvement comes from format readiness. A useful production workflow does not stop at one horizontal export. It should support the real destinations where content is consumed, whether that means a vertical clip for reels, a square cut for paid social, or a landscape version for a landing page. This is where workflow quality often matters more than raw model novelty.
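Format readiness is partly simple geometry. As a minimal sketch, the function below computes the largest centered crop of a source frame for a target aspect ratio. The 9:16, 1:1, and 16:9 targets are assumed as typical vertical, square, and landscape formats, and rounding down to even pixel dimensions is an assumption that suits many video encoders. A centered crop is also only a starting point, since real footage may need the subject reframed.

```python
from fractions import Fraction

# Assumed target aspect ratios (width / height) for common destinations.
TARGETS = {
    "vertical": Fraction(9, 16),
    "square": Fraction(1, 1),
    "landscape": Fraction(16, 9),
}


def center_crop(width, height, ratio):
    """Return (x, y, w, h) for the largest centered crop with w/h close to ratio."""
    if Fraction(width, height) > ratio:
        # Source is wider than the target: keep full height, trim the sides.
        h = height
        w = int(height * ratio)
    else:
        # Source is as narrow as or narrower than the target: keep full width.
        w = width
        h = int(width / ratio)
    # Round down to even dimensions (assumption: the encoder prefers them).
    w -= w % 2
    h -= h % 2
    x = (width - w) // 2
    y = (height - h) // 2
    return x, y, w, h


for name, ratio in TARGETS.items():
    print(name, center_crop(1920, 1080, ratio))
```

Running the same calculation for every destination from one master file is what lets a single approved clip become three deliverables instead of three separate projects.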

Why model access still matters

Workflow does not replace model quality. It makes model quality more usable. Different generators are better at different jobs. Some are stronger at photoreal motion. Some are better for stylized sequences. Some handle camera movement more convincingly. Teams that can compare outputs and route projects more intentionally usually get better creative results than teams locked into one model for every task.

This is where a multi-model platform such as Cliprise can be useful in practice. Instead of thinking only in terms of a single generation engine, teams can work inside a broader environment built around AI image, video, and voice creation with multiple model options and a more centralized production flow.

That does not mean every project needs complexity. In fact, the point of a good workflow is to reduce unnecessary complexity. The right stack should help creators iterate quickly at the beginning, then move into more deliberate outputs once the best direction is clear. Fast concept generation, clearer model choice, and easier export paths create compounding gains over time.

The business case for workflow-first video creation

For brands, agencies, and in-house teams, speed is only one part of the value. Consistency matters just as much. If two people on the same team create assets in completely different systems, the output quality can vary wildly. A workflow-first setup reduces that variability by making it easier to standardize formats, prompts, revision loops, and approval paths.

Cost visibility also improves. When teams understand where generation, iteration, and final rendering happen, it becomes easier to estimate output cost per asset and choose the right plan for expected volume. A transparent pricing page helps here because creative teams do not just need features. They need predictable economics.
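The arithmetic behind cost per asset is short enough to write down. The sketch below assumes a simple model in which every generation attempt has a flat cost and each final asset has an optional render cost. The function name, parameters, and all figures are illustrative assumptions, not real pricing from any platform.

```python
def cost_per_usable_asset(generations, cost_per_generation, usable_assets,
                          render_cost_per_asset=0.0):
    """Total spend divided by the number of assets that were actually usable."""
    if usable_assets <= 0:
        raise ValueError("usable_assets must be positive")
    total = generations * cost_per_generation + usable_assets * render_cost_per_asset
    return total / usable_assets


# Illustrative numbers only: 40 attempts at 0.50 each, 8 usable results,
# and 1.00 per final render.
estimate = cost_per_usable_asset(40, 0.50, 8, render_cost_per_asset=1.00)
print(f"Estimated cost per usable asset: {estimate:.2f}")
```

The useful part is the denominator. Dividing by usable assets rather than total generations makes the cost of heavy iteration visible, which is the number a team needs when choosing a plan for expected volume.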

This is especially important as AI video moves from experimentation into regular production. Once content is being created weekly or daily, decision-makers start asking better questions. How many usable assets can we make from one campaign concept? Which model gives the best result for this format? How many iterations are normal before final output? A workflow-driven setup makes those answers easier to track.

Where this trend is heading

The market will keep adding new models, but the winners for many real teams may be the platforms that reduce operational friction. The future is not just better generation. It is better orchestration around generation. That means faster movement from prompt to publishable asset, less rework between tools, and more reliable creative output across formats and campaigns.

For creators and marketers, the practical takeaway is simple. Do not evaluate AI video only by the flashiest demo. Evaluate it by how quickly your team can turn an idea into multiple usable assets with consistent quality. That is the test that matters in production. In 2026, the best video systems are not just powerful. They are organized.