SERP API vs. Traditional Web Scraping: Which Is Better for SEO Data Extraction?

If you are extracting SEO data at any meaningful scale, you have likely faced this question: should you build your own web scraper or use a SERP API? Both approaches can get the job done, but they come with very different trade-offs in cost, reliability, and long-term maintenance. This article breaks down both options so you can make the right call for your use case.

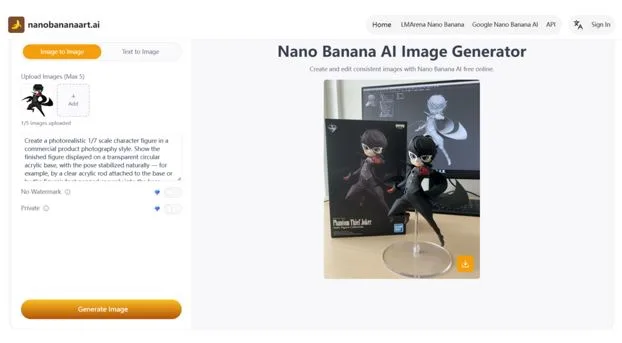

What Is a SERP API?

A SERP API (Search Engine Results Page API) is a managed service that extracts search result data on your behalf. Instead of building a scraper, managing proxies, and solving CAPTCHAs yourself, you send an API request and receive clean, structured JSON in return. The provider handles all the complexity of rotating IPs, parsing HTML, and adapting to Google layout changes behind the scenes.
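To make the request-and-response flow concrete, here is a minimal sketch in Python. The endpoint `api.example-serp.com` and the parameter names `api_key`, `query`, and `country` are placeholders invented for illustration, as is the sample response body; a real provider's documentation defines the actual names.

```python
import json
from urllib.parse import urlencode

# Hypothetical endpoint and parameter names; check your provider's docs.
BASE_URL = "https://api.example-serp.com/search"


def build_request_url(query, api_key, country="us"):
    """Return the full GET URL for one search query."""
    params = {"api_key": api_key, "query": query, "country": country}
    return f"{BASE_URL}?{urlencode(params)}"


def parse_response(body):
    """Decode the JSON body and return the list of organic results."""
    data = json.loads(body)
    return data.get("organic", [])


url = build_request_url("best running shoes", api_key="YOUR_API_KEY")

# A made-up response body standing in for what the service would return.
sample_body = '{"organic": [{"position": 1, "title": "Example", "url": "https://example.com"}]}'
results = parse_response(sample_body)
```

The sketch stops short of sending the request, so it runs without a network connection or an account. In practice you would pass `url` to an HTTP client and feed the response body to `parse_response`.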

What Is Traditional Web Scraping?

Traditional web scraping is a DIY approach. You write code using tools like Python with BeautifulSoup or Scrapy, or browser automation frameworks like Playwright or Selenium. You configure proxies, set custom headers, handle rate limits, and write custom parsers to extract the data you need. It gives you full control, but it also places the entire operational burden on your team.
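For a sense of what "writing a custom parser" involves, here is a small sketch. It uses Python's built-in `html.parser` rather than BeautifulSoup or Scrapy so that it runs without extra installs, and the markup in `SAMPLE_HTML` is invented for illustration. Real result pages are far more complex, and their structure changes.

```python
from html.parser import HTMLParser

# Made-up markup for illustration only; real result pages are messier
# and their class names and nesting change without notice.
SAMPLE_HTML = """
<div class="result"><a href="https://example.com/a"><h3>First result</h3></a></div>
<div class="result"><a href="https://example.com/b"><h3>Second result</h3></a></div>
"""


class ResultParser(HTMLParser):
    """Collect position, title, and url for every <a><h3>...</h3></a> pair."""

    def __init__(self):
        super().__init__()
        self.results = []
        self._current_url = None  # href of the <a> we are inside, if any
        self._in_title = False    # True while inside an <h3> within a link

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._current_url = dict(attrs).get("href")
        elif tag == "h3" and self._current_url:
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "h3":
            self._in_title = False
        elif tag == "a":
            self._current_url = None

    def handle_data(self, data):
        if self._in_title:
            self.results.append({
                "position": len(self.results) + 1,
                "title": data.strip(),
                "url": self._current_url,
            })


parser = ResultParser()
parser.feed(SAMPLE_HTML)
parsed = parser.results
```

Notice that the parser is tied to one assumption: titles live in an `<h3>` inside a link. If that assumption stops holding, `parsed` simply comes back empty, with no error raised.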

Quick Comparison at a Glance

| Factor | SERP API | Traditional Web Scraping |
| --- | --- | --- |
| Setup Time | Fast: one API call | Slow: custom build required |
| Maintenance | Low: provider handles updates | High: breaks with layout changes |
| Scalability | Easily handles large volumes | Harder to scale reliably |
| Cost | Predictable subscription | Hidden infra & developer costs |
| Accuracy | Structured JSON output | Depends on your parser quality |
| Anti-bot Handling | Built-in by provider | You manage it yourself |
| Best For | SEO teams, agencies, SaaS | Custom or one-off scraping tasks |

Setup and Development Time

With traditional scraping, getting started means setting up a browser automation environment, writing CSS or XPath selectors, handling redirects, and building retry logic. A basic working scraper can take days to build and test. A SERP API, on the other hand, usually requires just a few lines of code and an API key. Most teams can go from zero to live data in under an hour.
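Retry logic is one of the pieces a DIY scraper needs early on. Below is a minimal sketch of retrying with exponential backoff. The function names, the three-attempt limit, and the one-second base delay are illustrative choices, and `flaky_fetch` is a stand-in for a real HTTP call.

```python
import time


def fetch_with_retries(fetch, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch() until it succeeds or max_attempts is reached.

    Waits base_delay, then double that, and so on between attempts.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** (attempt - 1))


# Stand-in for a real HTTP call: fails twice, then succeeds.
calls = {"count": 0}


def flaky_fetch():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary failure")
    return "<html>ok</html>"


# Record the delays in a list instead of actually sleeping.
delays = []
page = fetch_with_retries(flaky_fetch, sleep=delays.append)
```

Passing `sleep` as a parameter keeps the example instant to run and makes the backoff schedule easy to inspect. A production version would usually catch narrower exception types and add random jitter to the delays.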

Data Accuracy and Structure

SEO workflows depend on precise, structured data. You need clean fields for ranking position, page title, URL, snippet, People Also Ask results, ads, local pack entries, AI Overviews, and related searches. A SERP API delivers this as a consistent JSON structure. Traditional scraping gives you raw HTML, and your parser must handle every variation. When Google changes its layout, which happens frequently, your selectors break and your data becomes unreliable until you fix them.

Scalability for Large SEO Projects

If you are tracking 10,000 keywords daily, running competitor monitoring, or building a rank tracking tool, scale matters enormously. SERP APIs are designed for high-volume requests and typically offer concurrency, geolocation support, and device targeting out of the box. Scaling a DIY scraper to the same volume requires significant infrastructure investment, including distributed proxies, queue management, and cloud servers.
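To give the volume some shape, the sketch below fans a keyword list out over a bounded thread pool and works out the average request rate for the 10,000-keywords-per-day case. `fetch_rank` is a placeholder that returns fake data, and the worker count of 8 is an arbitrary assumption. With a real scraper, each worker would also need its own proxy and throttling.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_rank(keyword):
    """Placeholder for one API call or one scrape; returns a fake result."""
    return {"keyword": keyword, "position": len(keyword) % 10 + 1}


def track_keywords(keywords, max_workers=8):
    """Run fetch_rank over all keywords with at most max_workers in flight."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in the same order as the input keywords.
        return list(pool.map(fetch_rank, keywords))


keywords = [f"keyword {i}" for i in range(100)]
rankings = track_keywords(keywords)

# Back-of-the-envelope load for 10,000 keywords per day, assuming one
# request per keyword spread evenly over 24 hours.
requests_per_minute = 10_000 / (24 * 60)
```

Spread evenly, that is roughly seven requests per minute. Real workloads tend to be burstier, for example when every keyword must be checked in the same morning window, and adding locations or devices multiplies the count.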

Cost Comparison: API Pricing vs. Hidden Scraping Costs

Traditional scraping often looks cheaper upfront because the core libraries are free. But the true costs add up quickly: residential proxies, CAPTCHA-solving services, cloud compute, monitoring tools, and, most significantly, developer time spent on maintenance. SERP API pricing is predictable and subscription-based, making budgeting easier. For many teams, the total cost of ownership of DIY scraping can overtake the cost of a SERP API subscription within a few months.
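One way to run this comparison for your own situation is to add up the cost categories above. The sketch below does that. Every figure in it is a placeholder chosen to show the arithmetic, not a quote, a benchmark, or any provider's actual pricing.

```python
def monthly_diy_cost(proxy, captcha, compute, dev_hours, hourly_rate):
    """Sum the recurring monthly costs of running your own scraper."""
    return proxy + captcha + compute + dev_hours * hourly_rate


# Every figure below is a placeholder; substitute your own numbers.
diy = monthly_diy_cost(
    proxy=300,       # residential proxy plan
    captcha=50,      # CAPTCHA-solving service
    compute=100,     # cloud servers and monitoring
    dev_hours=20,    # maintenance time per month
    hourly_rate=60,  # loaded developer cost per hour
)
api_subscription = 250  # hypothetical SERP API plan

monthly_difference = diy - api_subscription
```

With these made-up inputs, developer time accounts for 1,200 of the 1,650 DIY total, which is why the maintenance line deserves the most scrutiny when you plug in real values.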

Handling Google Blocks, CAPTCHAs, and Rate Limits

Google aggressively defends against automated access. Blocking, CAPTCHA challenges, and IP bans are constant threats for scrapers. With traditional scraping, you are responsible for everything: proxy rotation, CAPTCHA solving integrations, request throttling, and ban detection. SERP API providers handle all of this internally, maintaining high success rates without you lifting a finger.
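Here is a rough sketch of two of those responsibilities, proxy rotation and ban detection, working together. The proxy addresses are documentation-range placeholders, the block check is a simple heuristic rather than a complete detector, and `send` is a caller-supplied function so the example can run against a fake transport.

```python
from itertools import cycle

# Placeholder proxy addresses from a documentation range; supply your own pool.
PROXIES = ["203.0.113.10:8080", "203.0.113.11:8080", "203.0.113.12:8080"]

# HTTP status codes often returned when a client is blocked or rate limited.
BLOCK_STATUSES = {403, 429}


def looks_blocked(status_code, body):
    """Heuristic ban check: a block status or a CAPTCHA marker in the page."""
    return status_code in BLOCK_STATUSES or "captcha" in body.lower()


def fetch_via_rotation(url, send, proxies=PROXIES, max_tries=3):
    """Try url through successive proxies until one is not blocked.

    send(url, proxy) is supplied by the caller and returns (status, body).
    """
    rotation = cycle(proxies)
    for _ in range(max_tries):
        proxy = next(rotation)
        status, body = send(url, proxy)
        if not looks_blocked(status, body):
            return proxy, body
    raise RuntimeError(f"blocked on all {max_tries} attempts")


# Fake transport: the first proxy is "banned", the others work.
def fake_send(url, proxy):
    if proxy == PROXIES[0]:
        return 429, "Too many requests"
    return 200, "<html>results</html>"


used_proxy, html = fetch_via_rotation("https://example.com/search?q=test", fake_send)
```

A production setup would add throttling between requests, track which proxies are burned, and plug in a CAPTCHA-solving service, which is where much of the ongoing effort goes.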

When Traditional Web Scraping Makes Sense

DIY scraping is not always the wrong choice. It can be a good fit when:

- You are scraping a small number of pages infrequently

- The target site has a simple, stable HTML structure

- You need highly custom data not covered by any API

- You have a dedicated engineering team with scraping expertise

- The project is experimental and not yet production-critical

When a SERP API Is the Better Choice

A SERP API makes the most sense when:

- You are building a rank tracking or SEO analytics platform

- You need daily or real-time keyword monitoring at scale

- Your team does not have the bandwidth to maintain scrapers

- You need structured data quickly and consistently

- You are tracking SERP features like AI Overviews, ads, or local packs

- You operate across multiple countries or languages

Developer Experience: JSON vs. HTML Parsing

Getting a clean JSON response from a SERP API means your application code stays simple and readable. You access fields directly, like `result.organic[0].position` or `result.peopleAlsoAsk` (exact field names vary by provider). With HTML scraping, you write and maintain fragile selectors that can silently break after any Google update. Debugging a broken scraper at 2 AM is not how most developers want to spend their time.
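In Python, that direct field access looks something like the sketch below. The response body is made up and reuses the field names mentioned above (`organic`, `position`, `peopleAlsoAsk`); the `question` key and the `rank_of` helper are assumptions added for illustration.

```python
import json

# Made-up response using the field names mentioned above; real providers differ.
raw = """
{
  "organic": [
    {"position": 1, "title": "Example A", "url": "https://example.com/a"},
    {"position": 2, "title": "Example B", "url": "https://example.org/b"}
  ],
  "peopleAlsoAsk": [{"question": "What is a SERP?"}]
}
"""

result = json.loads(raw)

top_position = result["organic"][0]["position"]
questions = [item["question"] for item in result.get("peopleAlsoAsk", [])]


def rank_of(domain, organic):
    """Return the position of the first result whose URL contains domain, else None."""
    for entry in organic:
        if domain in entry["url"]:
            return entry["position"]
    return None


rank = rank_of("example.org", result["organic"])
```

There are no selectors anywhere in this code. If a field is missing, `.get()` with a default makes that case explicit instead of letting it fail quietly.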

Reliability and Long-Term Maintenance

Scraping is not a one-time setup. Google updates its SERP layout regularly, introduces new features like AI Overviews, and changes how certain elements are rendered. Every update is a potential breakage point for your scraper. SERP API providers monitor these changes continuously and update their parsers so you do not have to. Over a 12-month period, this difference in maintenance burden is substantial.
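If you do maintain your own scraper, a cheap safeguard is to sanity-check every parsed page so that a layout change shows up as an alert rather than as quietly wrong data. The sketch below is one possible check. The rules and the `min_results` threshold of 5 are assumptions to tune for your own data.

```python
def validate_results(results, min_results=5):
    """Return a list of problems found in one parsed results page."""
    problems = []
    if len(results) < min_results:
        problems.append(f"only {len(results)} results, expected at least {min_results}")
    for index, item in enumerate(results, start=1):
        if not item.get("title"):
            problems.append(f"result {index} has an empty title")
        if not str(item.get("url", "")).startswith("http"):
            problems.append(f"result {index} has a malformed url")
    return problems


# One page that parsed cleanly and one that looks like a broken selector.
healthy = [{"title": f"Result {i}", "url": f"https://example.com/{i}"} for i in range(1, 11)]
broken = [{"title": "", "url": "/relative/path"}]

healthy_problems = validate_results(healthy)
broken_problems = validate_results(broken)
```

Running a check like this after every scrape and alerting on a non-empty list will not prevent breakage, but it shortens the time between a layout change and someone noticing it.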

Final Verdict

Traditional scraping is perfectly reasonable for small experiments or simple, low-frequency tasks. But for SEO data extraction at scale, a SERP API is almost always the better choice. It saves setup time, eliminates ongoing maintenance headaches, delivers structured data reliably, and lets your team focus on building value rather than fighting infrastructure.

How Scrapingdog Helps

Scrapingdog’s Google Search API makes SERP data extraction straightforward. With a single API request, you can retrieve organic results, paid ads, People Also Ask questions, AI Overview content, featured snippets, local packs, and more. Whether you are building an SEO dashboard, a rank tracker, or a keyword research tool, Scrapingdog gives you the structured, reliable data you need to move fast.