UndetectedGPT vs AI Detectors: How Accurate Are AI Detection Tools in 2026?

The Growing Debate Around AI-Generated Content

By 2026, artificial intelligence has become deeply embedded in content creation, digital marketing, education, and even journalism. Alongside this rapid adoption, a parallel industry has grown just as quickly: AI detection tools. These systems claim to identify whether a piece of text was written by a human or generated by a machine.

At the center of this debate is the pairing of UndetectedGPT and AI detectors, which reflects how users and developers increasingly compare tools designed to bypass detection with systems built to identify AI-written content. The real question is no longer whether AI-generated text exists, but how reliably it can be detected.

As more creators rely on AI writing assistants, the accuracy of detection systems has become a critical concern. But how precise are these tools really in 2026, and can they still be trusted?

What AI Detectors Actually Do

AI detectors are software systems designed to analyze writing patterns and predict whether a text was produced by a human or an AI model. They don’t “know” the truth in a literal sense. Instead, they rely on statistical patterns, probability scoring, and linguistic structure.

Most modern tools evaluate:

- Sentence predictability and fluency

- Repetition of phrases or structures

- Token probability distributions

- Writing consistency and randomness levels

- Stylometric fingerprints

The output is usually a percentage score indicating “likely AI-generated” or “likely human-written.” However, these results are not absolute and often vary between platforms.
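To make two of the signals above concrete, here is a minimal Python sketch of a sentence-length variation measure and a repeated-phrase ratio. The function names, the naive sentence splitter, and the choice of trigrams are assumptions made for illustration; they are not taken from any specific detector, and commercial tools combine many more features.

```python
import math
import re
from collections import Counter


def split_sentences(text: str) -> list[str]:
    """Naive splitter on ., ! and ? (an assumption; real tools use proper tokenizers)."""
    parts = re.split(r"[.!?]+", text)
    return [p.strip() for p in parts if p.strip()]


def sentence_length_variation(text: str) -> float:
    """Coefficient of variation of sentence lengths, measured in words.

    Low values mean very uniform sentences; higher values mean more varied rhythm.
    """
    lengths = [len(s.split()) for s in split_sentences(text)]
    if len(lengths) < 2:
        return 0.0
    mean = sum(lengths) / len(lengths)
    variance = sum((n - mean) ** 2 for n in lengths) / len(lengths)
    return math.sqrt(variance) / mean


def repeated_trigram_ratio(text: str) -> float:
    """Share of three-word sequences that occur more than once in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    trigrams = [tuple(words[i:i + 3]) for i in range(len(words) - 2)]
    if not trigrams:
        return 0.0
    counts = Counter(trigrams)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(trigrams)


if __name__ == "__main__":
    sample = "The cat sat. The cat sat on the mat and then it slept for hours."
    print(sentence_length_variation(sample))
    print(repeated_trigram_ratio(sample))
```

Neither number says anything definitive on its own. A detector would feed features like these into a trained classifier, which is where the percentage score comes from.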

How AI Detection Technology Works in 2026

By 2026, AI detection systems have become significantly more advanced than early-generation models. They now use hybrid techniques combining machine learning classifiers with large linguistic datasets.

Modern systems typically work in three layers:

- Pattern Recognition Layer: This layer analyzes sentence structure, vocabulary complexity, and predictability.

- Behavioral Language Modeling: Here, detectors compare text against known AI-generated patterns from previous models.

- Contextual Consistency Checks: This examines whether ideas flow naturally or feel statistically “generated.”

Despite these improvements, no system has achieved perfect accuracy. This is because AI models themselves have also evolved, producing more human-like text than ever before.

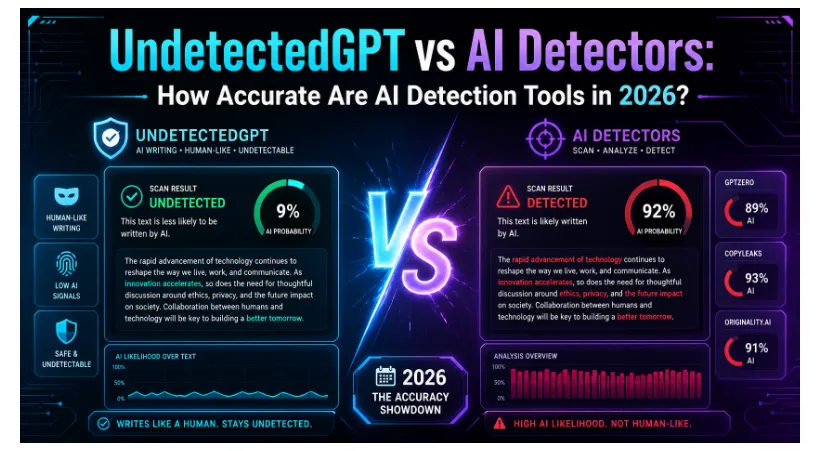

UndetectedGPT vs AI Detection Systems

The ongoing comparison between UndetectedGPT-style rewriting systems and detection tools highlights a growing technological arms race.

On one side, AI detectors aim to identify synthetic writing. On the other side, tools designed to “humanize” or refine AI-generated content attempt to make text indistinguishable from human writing.

The tension between these two systems is what makes detection reliability such a complex issue.

Key differences include:

- AI detectors analyze probability patterns in text

- Humanizing tools adjust tone, structure, and variability

- Detectors rely on past data, while generators evolve rapidly

- One focuses on identification, the other on transformation

This dynamic means detection accuracy is constantly shifting rather than stable.

How Accurate Are AI Detectors Really?

Despite marketing claims, AI detection systems in 2026 are far from perfect. Independent evaluations and real-world testing show mixed results depending on content type, length, and complexity.

In general, accuracy ranges from 60% to 90%, and the higher figures are reached only under ideal conditions. Short texts, creative writing, and technical content often reduce accuracy significantly.

Common accuracy challenges include:

- False positives on human-written academic or formal text

- False negatives when AI text is heavily edited

- Language model updates outpacing detector updates

- Variations in writing style across regions and industries

This is why even advanced systems struggle with consistency. A text might be flagged as AI-generated by one tool and considered human-written by another.
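A single accuracy figure hides the difference between false positives and false negatives. The short Python sketch below shows how the three numbers are computed from a detector's results on a labeled test set. The class name and the counts in the example are invented for illustration and do not describe any real tool.

```python
from dataclasses import dataclass


@dataclass
class DetectorResults:
    true_positives: int   # AI text flagged as AI
    false_positives: int  # human text flagged as AI
    true_negatives: int   # human text passed as human
    false_negatives: int  # AI text passed as human


def accuracy(r: DetectorResults) -> float:
    """Share of all texts that were classified correctly."""
    total = (r.true_positives + r.false_positives
             + r.true_negatives + r.false_negatives)
    return (r.true_positives + r.true_negatives) / total


def false_positive_rate(r: DetectorResults) -> float:
    """Share of human-written texts wrongly flagged as AI."""
    return r.false_positives / (r.false_positives + r.true_negatives)


def false_negative_rate(r: DetectorResults) -> float:
    """Share of AI-generated texts that slipped through as human."""
    return r.false_negatives / (r.false_negatives + r.true_positives)


if __name__ == "__main__":
    # Hypothetical counts: 100 human texts and 100 AI texts.
    results = DetectorResults(true_positives=80, false_positives=10,
                              true_negatives=90, false_negatives=20)
    print(f"accuracy: {accuracy(results):.2f}")
    print(f"false positive rate: {false_positive_rate(results):.2f}")
    print(f"false negative rate: {false_negative_rate(results):.2f}")
```

With these made-up counts the detector is 85% accurate overall, yet it still wrongly flags one in ten human writers and misses one in five AI texts.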

Why Detection Tools Struggle in Real Scenarios

The core limitation of AI detection lies in the nature of language itself. Human writing is not perfectly random, and AI writing is not perfectly predictable.

Several factors make detection unreliable:

- Human writers often use structured, repetitive patterns

- AI models are trained to mimic human unpredictability

- Short passages lack enough data for accurate analysis

- Mixed content (AI + human editing) confuses classifiers

Even with advanced algorithms, detectors are essentially making probabilistic guesses rather than definitive judgments.
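The short-passage problem can be illustrated with basic statistics. The sketch below assumes, as a deliberate simplification, that every token contributes an independent, equally noisy signal, so the uncertainty of the averaged score shrinks with the square root of the token count. Real text violates this assumption, and the function name and numbers are hypothetical.

```python
import math


def standard_error(per_token_std: float, token_count: int) -> float:
    """Standard error of a mean over token_count independent signals.

    Assumes independent tokens with identical spread (a simplification).
    """
    if token_count <= 0:
        raise ValueError("token_count must be positive")
    return per_token_std / math.sqrt(token_count)


if __name__ == "__main__":
    # Same per-token noise, different passage lengths.
    for tokens in (25, 100, 400):
        print(tokens, round(standard_error(1.0, tokens), 3))
```

Under this simplified model, a 25-token passage gives an estimate four times noisier than a 400-token one, which is one way to see why short texts are harder to classify.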

The Role of Tools Like UndetectedGPT in Modern Writing

As detection systems evolve, so do tools that modify AI-generated text to appear more natural. Platforms such as UndetectedGPT help users refine tone, improve flow, and reduce detectable patterns in machine-generated writing.

However, it’s important to understand that these tools do not “break” detection systems. Instead, they adjust linguistic patterns to make content less predictable and more human-like.

Typical transformations include:

- Varying sentence length and rhythm

- Reducing repetitive phrasing

- Adding conversational transitions

- Introducing stylistic inconsistencies that mimic human writing

This creates a more natural reading experience, even if the original draft was AI-assisted.

Real-World Use Cases and Challenges

AI detection is widely used across multiple industries in 2026, but its limitations are also widely recognized.

Common use cases include:

- Academic integrity checks in universities

- Content moderation on publishing platforms

- Recruitment screening for written assessments

- Editorial verification in digital media

However, challenges remain:

- Over-reliance on detection scores without context

- Misclassification affecting students or professionals

- Lack of transparency in scoring methods

- Difficulty in handling multilingual content

Because of these issues, many organizations now use AI detection as a supporting tool rather than a final decision-maker.

Can AI Detectors Keep Up With Advancing Models?

One of the biggest questions in 2026 is whether AI detection technology can keep up with rapidly improving language models. The answer is uncertain.

AI generation models are improving in:

- Context awareness

- Emotional tone adaptation

- Writing diversity

- Human-like imperfection simulation

At the same time, detectors are trying to adapt using:

- Continuous retraining on new datasets

- Ensemble detection methods

- Context-based scoring systems

- Hybrid human-AI review processes
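As a rough illustration of how ensemble scoring and human review might fit together, the Python sketch below averages scores from several detectors and routes the text to a reviewer when they disagree. The detector names, the 0.8 flag threshold, and the 0.3 disagreement threshold are assumptions chosen for the example, not values used by any real product.

```python
from statistics import mean


def ensemble_verdict(scores: dict[str, float],
                     flag_at: float = 0.8,
                     max_spread: float = 0.3) -> str:
    """Combine per-detector AI-likelihood scores (0.0 to 1.0) into one outcome.

    Returns "disagreement" when detectors differ too much to trust the average,
    "flagged" when the average is high, and "not flagged" otherwise.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    values = list(scores.values())
    spread = max(values) - min(values)
    if spread > max_spread:
        return "disagreement"  # send to a human reviewer
    if mean(values) >= flag_at:
        return "flagged"       # still a prompt for review, not a final decision
    return "not flagged"


if __name__ == "__main__":
    # Hypothetical detectors and scores.
    print(ensemble_verdict({"detector_a": 0.90, "detector_b": 0.85, "detector_c": 0.95}))
    print(ensemble_verdict({"detector_a": 0.90, "detector_b": 0.20}))
```

Treating disagreement as its own outcome keeps a split result from being averaged into a misleading middle score.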

Still, the gap between generation and detection continues to narrow, making the field increasingly competitive and unpredictable.

What This Means for Content Creators in 2026

For writers, marketers, and digital creators, the rise of both AI writing tools and detection systems has created a new reality. Content authenticity is no longer judged solely by origin but by quality, clarity, and usefulness.

Creators today focus on:

- Editing AI-generated drafts for natural tone

- Blending human creativity with AI efficiency

- Avoiding over-reliance on automated output

- Ensuring content feels original and engaging

The presence of tools like UndetectedGPT alongside AI detectors reflects this shift toward hybrid content creation workflows rather than purely automated writing.

Ultimately, the most successful content is not defined by whether it is detected as AI or human, but by how well it connects with readers, solves problems, and delivers value.