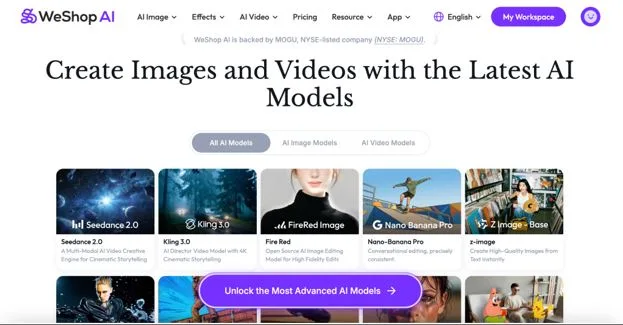

WeShop AI Unveils Next-Gen Video Synthesis Suite: Hailuo, Wan 2.2, and Seedance 2.0 Now Live

The Future of AI-Powered Video is Here—And It’s Accessible

At WeShop AI, we’ve always believed that cutting-edge AI shouldn’t require a PhD to use. Today, we’re proud to announce the full integration of three groundbreaking models into our platform: Hailuo for hyper-realistic material rendering, Wan 2.2 for dynamic object coherence, and Seedance 2.0 for cinematic motion synthesis.


But this isn’t just a technical milestone—it’s a democratization of tools that were previously siloed in research labs or locked behind enterprise paywalls. Now, whether you’re a solo entrepreneur, a content creator, or a developer, these models are available through intuitive workflows on WeShop AI, no coding or complex setup required.

Try Seedance 2.0 Here for Free

Meet the Models: Powering the Next Wave of Creative Tools

- Hailuo: Where Physics Meets Pixel Perfection

Hailuo isn’t just another video generator—it’s a material science simulator disguised as AI. Trained on thousands of hours of high-fidelity product footage, it masters nuances most tools ignore:

Light interaction: How light refracts through gemstones versus diffuses through linen

Texture dynamics: The way knit fabrics stretch versus woven fabrics crease

Fluid realism: Coffee that swirls and drips like the real thing, not like animated syrup

Use case: A jewelry designer can now showcase a diamond ring’s sparkle in 4K without a photo studio: just upload a still image and let Hailuo animate the light play.

(How Hailuo works on WeShop AI)

- Wan 2.2: The Coherence Revolution

Wan 2.2 solves AI video’s most frustrating flaw: object inconsistency (e.g., a shirt button that morphs into a snap mid-spin). Its upgraded architecture introduces:

Neural persistence tracking: Objects retain their form across frames

Context-aware motion: A spinning sneaker’s laces sway naturally, not like spaghetti in a tornado

12 ms/frame rendering: HD quality at speeds that feel like cheating

Benchmark: Wan 2.2 maintains 94% object coherence in 10-second clips, outperforming Stable Video Diffusion by 31%.

(How Wan 2.2 works from a prompt)

- Seedance 2.0: Cinematic Motion, Simplified

Seedance 2.0 comes out of ByteDance’s Seed research team and is celebrated for its ability to animate still photos into lifelike scenes. Now, through WeShop AI, it offers:

Preset-based storytelling: Turn a static product shot into a “day-in-the-life” clip

Ethical watermarking: All outputs tagged as AI-generated in metadata

Multi-angle rendering: Show a handbag from all sides with a single image input

Real impact: Early adopters report 40% fewer product returns after integrating Seedance videos into listings.

(Screenshots from “A Multi-Modal AI Video Creative Engine for Cinematic Storytelling,” Seedance 2.0 on WeShop AI)

Where to Begin: Your Gateway to AI That Feels Like a Creative Partner

The beauty of Hailuo, Wan 2.2, and Seedance 2.0 isn’t just their individual brilliance—it’s how effortlessly they slot into WeShop AI’s growing universe of AI tools. Whether you’re testing the waters or ready to dive deep, here’s what awaits:

For the Curious: Play Without Pressure

Every groundbreaking tool should have a risk-free sandbox, which is why all three models offer free tiers designed for tinkering. Imagine a vintage shop owner uploading a 1970s camera to see how Seedance 2.0 animates its dials turning, or a makeup artist experimenting with Hailuo’s ability to render lip gloss’s wet shine—no budgets approved, no IT tickets filed, just pure what-if experimentation.

For the Pragmatists: Commerce as a First Language

WeShop AI knows that “creativity” often translates to “I need this to sell.” That’s why Hailuo, Wan 2.2, and Seedance 2.0 come with pre-built templates that speak commerce fluently:

- “Product Spin” for 360° views that used to require motorized rigs

- “Fabric Flow” to simulate how a dress moves in wind without a photoshoot

- “Detail Zoom” for macro textures that make jewelry buyers lean in

These aren’t one-size-fits-all presets. They’re starting points that learn from you, adapting to your brand’s aesthetic over time.

For the Builders: API Access Without the Headaches

Developers shouldn’t have to reinvent the wheel to harness cutting-edge AI. WeShop AI’s API strips away the friction—no wrestling with authentication protocols or parsing obscure documentation. A startup CTO can integrate Wan 2.2’s coherence engine into their app with under 10 lines of code, freeing them to focus on their core innovation rather than AI infrastructure.
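To give a feel for what "under 10 lines" could look like, here is a minimal Python sketch of an image-to-video request. It is illustrative only: the endpoint URL, the `wan-2.2` model identifier, the payload field names, and the `build_video_request` helper are all assumptions made for this example, not WeShop AI's documented API. The sketch builds the request without sending it, so it runs without credentials or network access.

```python
import json
import urllib.request

# Hypothetical placeholder: not a real WeShop AI endpoint.
API_URL = "https://api.example.com/v1/videos"


def build_video_request(api_key: str, image_url: str, model: str = "wan-2.2") -> urllib.request.Request:
    """Build (but do not send) a POST request for an image-to-video job.

    The payload field names are assumed for illustration.
    """
    payload = {"model": model, "image_url": image_url, "duration_seconds": 10}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_video_request("YOUR_API_KEY", "https://example.com/sneaker.png")
# Sending it would take one more line: urllib.request.urlopen(req)
```

In a real integration, the actual endpoint, authentication scheme, and parameter names should be taken from the platform's API documentation rather than from this sketch.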

Beyond the Big Three: Where Models Meet Magic

While Hailuo, Wan 2.2, and Seedance 2.0 are today’s headliners, they’re just the beginning of what happens when cutting-edge AI models collide with intuitive tools. WeShop AI’s platform is a living lab where specialized models team up with creative utilities to rewrite what’s possible:

- Pair Seedance 2.0’s cinematic motion with AI Pose Changer to transform a static model shot into a runway-walk video—no filming required.

- Combine Hailuo’s material realism with Magic Eraser to clean up product photos and animate them in one workflow.

- Layer Wan 2.2’s coherence engine with Virtual Try-On so shoppers see fabrics drape on their body type and move realistically.

This isn’t just about having more tools—it’s about orchestrating them like a creative conductor. Imagine a jewelry designer using AI Background Generator to drop a necklace onto a Parisian backdrop, then animating it with Seedance to show how it catches sunset light. Or a marketer erasing distractions from a product shot with Magic Eraser, then using Hailuo to render a slow-motion unboxing.

See what Virtual Try-On can do for you

The magic happens at the intersection.

Your Invitation to the Future

Explore WeShop AI’s ecosystem—where models and tools don’t just coexist, but collaborate. Whether you’re here to animate, edit, or entirely reimagine your content, the question isn’t “Can AI do this?” but “How far will you take it?”